Learning from the Data — How We Trained and Served NLP Models at Scale

September 1, 2019 — Machine Learning Infrastructure

Table of Contents

- Introduction: Closing the Loop

- Streaming Inference Logs as Training Data

- Training Pipelines with Spark

- Topic Modeling with LDA

- Serving Models with BigDL

- Testing and Regression Harnesses

- Final Reflections

- Acknowledgments

← Read Post 2: Infrastructure Meets Intelligence — Serving Real-Time NLP in Memonia

1. Introduction: Closing the Loop

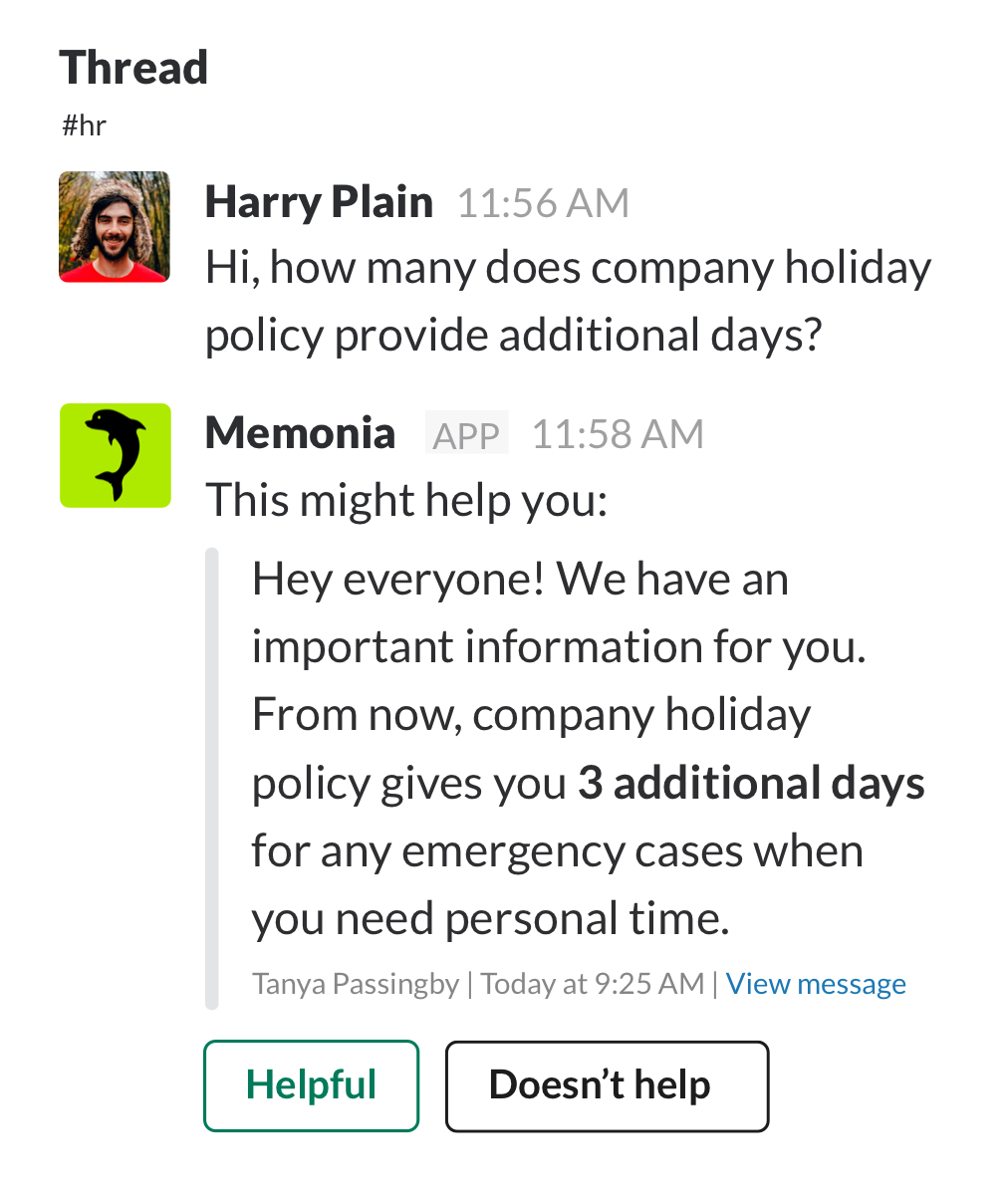

With Memonia live inside Slack, our models were making real-time predictions. But to improve them, we needed to learn from the data we were already processing.

This post is about how we:

- Captured inference logs from Slack messages

- Built continuous training datasets

- Used Spark and BigDL to train and serve models

- Validated quality using automated regression tests

By mid-2019, we had a fully operational ML loop running across Slack threads, Pub/Sub streams, and Spark clusters.

2. Streaming Inference Logs as Training Data

Rather than labeling new examples from scratch, we mined our existing message traffic.

Each NLP service (genre, question-type, QA) emitted structured logs:

- Input message

- Predicted label or span

- Confidence scores

- Slack context (thread, timestamp, user ID)

These logs were captured in Pub/Sub and written to GCS in Parquet format. We partitioned logs hourly and used them for batch processing and dataset staging.
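
To make the log layout concrete, here is a minimal sketch of one log row and the hourly partition it would fall into. The field names, the `gs://example-bucket/inference-logs` root, and the `task=/dt=/hour=` directory scheme are all assumptions for illustration; the post does not specify the real schema or paths.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class InferenceLog:
    """One structured log row. Field names are illustrative, not the real schema."""
    message: str       # input message text
    task: str          # e.g. "genre", "question-type", or "qa"
    prediction: str    # predicted label or answer span
    confidence: float  # model confidence for the prediction
    thread_id: str     # Slack thread identifier
    user_id: str       # Slack user ID
    timestamp: float   # Unix epoch seconds (UTC)


def hourly_partition(log: InferenceLog,
                     root: str = "gs://example-bucket/inference-logs") -> str:
    """Return the (hypothetical) hourly partition directory for a log row."""
    ts = datetime.fromtimestamp(log.timestamp, tz=timezone.utc)
    return f"{root}/task={log.task}/dt={ts:%Y-%m-%d}/hour={ts:%H}"


log = InferenceLog(
    message="How do I generate a report?",
    task="question-type",
    prediction="question",
    confidence=0.93,
    thread_id="T123",
    user_id="U456",
    timestamp=datetime(2019, 6, 1, 13, 45, tzinfo=timezone.utc).timestamp(),
)
print(hourly_partition(log))
```

Keying the directory on the event hour means a batch job can pick up exactly one hour of logs by path, without scanning the rest of the bucket.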

This gave us:

- Fresh examples with real-world ambiguity

- Cases where models disagreed or were uncertain

- A reliable source of future training material
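
One way to pull the uncertain or contested cases out of the logs is a simple margin check plus a label comparison. This is a sketch under assumptions: the `0.2` margin, the dictionary shapes, and the idea that two models score the same message for the same task are illustrative choices, not details from our pipeline.

```python
def is_uncertain(scores: dict, min_margin: float = 0.2) -> bool:
    """True when the top two class scores are closer than min_margin."""
    ranked = sorted(scores.values(), reverse=True)
    if len(ranked) < 2:
        return False
    return (ranked[0] - ranked[1]) < min_margin


def models_disagree(predictions: dict) -> bool:
    """True when models scoring the same message emit different labels."""
    return len(set(predictions.values())) > 1


# Toy rows; the labels and scores are made up for illustration.
rows = [
    {"message": "build is red again",
     "scores": {"engineering": 0.48, "smalltalk": 0.41, "hr": 0.11}},
    {"message": "thanks!",
     "scores": {"engineering": 0.03, "smalltalk": 0.95, "hr": 0.02}},
]

# Messages worth a second look before they enter a training set.
review_queue = [r["message"] for r in rows if is_uncertain(r["scores"])]
print(review_queue)
```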

3. Training Pipelines with Spark

We used Spark Structured Streaming for all major ETL jobs. Each job:

- Read hourly inference logs from GCS

- Applied filters (e.g., prediction confidence, thread length)

- Extracted features using CountVectorizer, n-grams, and token filters

- Wrote new datasets as Parquet files for later use
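
The filter and feature steps above can be sketched in plain Python. This is a stand-in for the Spark transformers, not the Spark code itself: the thresholds, the stop-word list, and the row keys (`confidence`, `thread_len`) are assumptions, and the direction of the confidence filter (keeping high-confidence rows) is a guess for illustration.

```python
import re
from collections import Counter

STOP_WORDS = {"the", "a", "an", "is", "to", "do", "i"}  # tiny illustrative list


def keep_row(row: dict, min_confidence: float = 0.8, min_thread_len: int = 2) -> bool:
    """Filter step: keep rows that pass both (hypothetical) thresholds."""
    return row["confidence"] >= min_confidence and row["thread_len"] >= min_thread_len


def tokenize(text: str) -> list:
    """Lowercase, split on non-alphanumerics, and drop stop words."""
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]


def ngrams(tokens: list, n: int) -> list:
    """Return the contiguous n-grams of a token list as space-joined strings."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def featurize(text: str) -> Counter:
    """Count unigrams and bigrams, similar in spirit to a count vectorizer."""
    tokens = tokenize(text)
    return Counter(tokens + ngrams(tokens, 2))


features = featurize("How do I generate a report?")
print(sorted(features))
```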

For classification tasks (genre/type), we trained:

- Logistic regression

- Naive Bayes

- Feedforward neural networks (via BigDL)
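
For readers unfamiliar with the simplest of these classifiers, here is a tiny multinomial Naive Bayes with add-one smoothing, written from scratch in plain Python. It is only meant to show the idea; our production training ran on Spark, and the class name, toy documents, and labels below are invented for the example.

```python
import math
from collections import Counter, defaultdict


class TinyNaiveBayes:
    """Multinomial Naive Bayes over token lists, with Laplace smoothing."""

    def __init__(self, alpha: float = 1.0):
        self.alpha = alpha

    def fit(self, docs, labels):
        self.class_counts = Counter(labels)        # documents per class
        self.token_counts = defaultdict(Counter)   # token counts per class
        for tokens, label in zip(docs, labels):
            self.token_counts[label].update(tokens)
        self.vocab = {t for c in self.token_counts.values() for t in c}
        return self

    def predict(self, tokens):
        total_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_docs in self.class_counts.items():
            counts = self.token_counts[label]
            denom = sum(counts.values()) + self.alpha * len(self.vocab)
            score = math.log(n_docs / total_docs)  # log prior
            for t in tokens:
                if t in self.vocab:                # ignore unseen tokens
                    score += math.log((counts[t] + self.alpha) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Toy training data, made up for illustration.
docs = [["build", "failed"], ["merge", "conflict"],
        ["lunch", "anyone"], ["happy", "friday"]]
labels = ["engineering", "engineering", "smalltalk", "smalltalk"]

model = TinyNaiveBayes().fit(docs, labels)
print(model.predict(["build", "conflict"]))
```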

Training jobs ran on preemptible VMs and were typically triggered weekly. Spark jobs could be launched locally, on Dataproc, or via Kubernetes using a simple submit script.

4. Topic Modeling with LDA

To better understand what people asked in Slack, we applied Latent Dirichlet Allocation (LDA) to the message text.

Steps:

- Cleaned and tokenized message text

- Applied CountVectorizer

- Trained LDA models using Spark MLlib

The results surfaced clusters like:

- “Build issues,” “environment config,” “merge conflicts”

- “VPN/login problems,” “Confluence links,” “vendor onboarding”

We used these to:

- Label unlabeled threads

- Validate genre assignments

- Build dashboards showing topic distributions over time
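
Once each message has a topic distribution, labeling and dashboard counts reduce to a few lines. The sketch below assumes the distributions are plain lists of weights; the `TOPIC_NAMES` mapping, the `0.5` dominance cutoff, and the sample rows are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical mapping from LDA topic index to a human-assigned name.
TOPIC_NAMES = {0: "build issues", 1: "VPN/login problems", 2: "vendor onboarding"}


def dominant_topic(distribution, min_weight: float = 0.5):
    """Return the index of the heaviest topic, or None if no topic dominates."""
    index = max(range(len(distribution)), key=distribution.__getitem__)
    return index if distribution[index] >= min_weight else None


def topics_by_day(rows):
    """Count dominant topics per day; rows are (date_string, distribution) pairs."""
    counts = defaultdict(Counter)
    for day, distribution in rows:
        topic = dominant_topic(distribution)
        if topic is not None:
            counts[day][TOPIC_NAMES[topic]] += 1
    return counts


# Made-up distributions standing in for LDA output.
rows = [
    ("2019-06-01", [0.8, 0.1, 0.1]),
    ("2019-06-01", [0.7, 0.2, 0.1]),
    ("2019-06-01", [0.4, 0.3, 0.3]),   # no dominant topic, left unlabeled
    ("2019-06-02", [0.1, 0.85, 0.05]),
]
daily = topics_by_day(rows)
print(dict(daily))
```

Leaving low-dominance messages unlabeled keeps mixed threads from polluting either the labels or the dashboard counts.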

5. Serving Models with BigDL

Our production models were exported from training scripts and served via Spark Streaming and BigDL-based jobs.

We used:

- NeuralNetworkModelServingStreamJob to load models into memory

- Pub/Sub as the event trigger for new messages

- JSON output published downstream to inference consumers

Features:

- GPU acceleration when available

- Automatic model reloading from GCS

- Modular scoring logic per task
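
Automatic reloading can be pictured as a small wrapper that checks a version marker and swaps in a new model when it changes. This is a generic sketch, not the BigDL serving job: `ReloadingModel`, `fetch_version`, and `load` are hypothetical names, and an in-memory dictionary stands in for a version file on GCS.

```python
from typing import Any, Callable


class ReloadingModel:
    """Serve the current model and reload it when the version marker changes."""

    def __init__(self, fetch_version: Callable[[], str], load: Callable[[str], Any]):
        self._fetch_version = fetch_version  # e.g. reads a small marker file
        self._load = load                    # loads the model for a version
        self._version = None
        self._model = None

    def get(self):
        latest = self._fetch_version()
        if latest != self._version:
            new_model = self._load(latest)   # load fully before swapping
            self._model, self._version = new_model, latest
        return self._model


# In-memory stand-ins for the storage bucket and the model loader.
store = {"current": "v1"}
loads = []


def fake_load(version):
    loads.append(version)
    return lambda text: f"{version}:{text}"


model = ReloadingModel(lambda: store["current"], fake_load)
first = model.get()("hi")
model.get()                     # same version, so no second load
store["current"] = "v2"         # a new model is published
second = model.get()("hi")
print(first, second, loads)
```

Because the new model is loaded before the reference is swapped, requests keep hitting the old model until the replacement is ready.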

We prioritized models that were:

- Interpretable (rule-based fallback paths)

- Lightweight enough for Slack latency constraints (<1s)

- Easily updated without downtime
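
A rule-based fallback path can be as simple as the following sketch: trust the model when it is confident, otherwise fall back to a rule anyone can read. The question-word list, the `0.6` threshold, and the `"statement"` label are assumptions for illustration rather than the rules we shipped.

```python
QUESTION_WORDS = {"how", "what", "why", "when", "where", "who", "can", "does"}


def rule_based_type(message: str) -> str:
    """Fallback rule: a trailing '?' or a leading question word means a question."""
    text = message.strip().lower()
    words = text.split()
    first = words[0] if words else ""
    if text.endswith("?") or first in QUESTION_WORDS:
        return "question"
    return "statement"


def classify(message: str, model_predict, min_confidence: float = 0.6):
    """Return (label, source), where source records which path produced the label."""
    label, confidence = model_predict(message)
    if confidence >= min_confidence:
        return label, "model"
    return rule_based_type(message), "rule"


# A stub model that is unsure about everything, to exercise the fallback.
def unsure_model(message):
    return "statement", 0.3


print(classify("How do I generate a report", unsure_model))
```

Returning the source alongside the label makes it easy to log how often the fallback fires, which is itself a useful signal of model quality.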

6. Testing and Regression Harnesses

Every NLP model in production had a matching test harness:

- Postman collections for input/output checks

- Newman scripts for automated regression runs

- CSV-based test cases for key phrases or edge cases

Example: a test might verify that “How do I generate a report?” always returns question + general.
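
The CSV-driven check can be sketched in a few lines of Python. Our actual harness ran through Postman and Newman, so treat this as an illustration of the idea only: the column names, the `predict` callable, and the reading of "question + general" as a (question type, genre) pair are assumptions.

```python
import csv
import io

# One test case per row; column names are hypothetical.
CASES_CSV = """message,expected_type,expected_genre
How do I generate a report?,question,general
"""


def run_regression(csv_text: str, predict):
    """Run every CSV case through predict and collect the mismatches."""
    failures = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        got = predict(row["message"])
        want = (row["expected_type"], row["expected_genre"])
        if got != want:
            failures.append((row["message"], want, got))
    return failures


# Stub predictor standing in for a call to the deployed service.
def stub_predict(message):
    return ("question", "general")


failures = run_regression(CASES_CSV, stub_predict)
print("failures:", failures)
```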

Each model was also evaluated offline:

- Precision/recall against labeled sets

- Confusion matrix plots

- Category-level breakdowns (e.g., “most confused with smalltalk”)
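
The offline metrics above are straightforward to compute from paired true and predicted labels. Here is a small plain-Python sketch; the function names and the toy labels are invented for the example.

```python
from collections import Counter


def confusion_counts(y_true, y_pred):
    """Count (true_label, predicted_label) pairs, i.e. a sparse confusion matrix."""
    return Counter(zip(y_true, y_pred))


def precision_recall(y_true, y_pred, label):
    """Per-class precision and recall; returns 0.0 when a denominator is empty."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
    predicted = sum(1 for p in y_pred if p == label)
    actual = sum(1 for t in y_true if t == label)
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


def most_confused_with(y_true, y_pred, label):
    """Return the label most often predicted in place of `label`, if any."""
    errors = Counter(p for t, p in zip(y_true, y_pred) if t == label and p != label)
    return errors.most_common(1)[0][0] if errors else None


# Toy labels, made up for illustration.
y_true = ["general", "general", "general", "smalltalk", "smalltalk", "hr"]
y_pred = ["general", "smalltalk", "smalltalk", "smalltalk", "general", "hr"]

print(precision_recall(y_true, y_pred, "general"))
print(most_confused_with(y_true, y_pred, "general"))
```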

This allowed us to ship updates with confidence — and roll back quickly if needed.

7. Final Reflections

Memonia’s training loop was scrappy but powerful:

- Spark gave us scalable feature pipelines

- Pub/Sub made logging and serving modular

- Flat files kept everything transparent and reproducible

We didn’t need massive infrastructure — just careful design, clean datasets, and tools that worked well together.

Memonia could learn from your team the way your team learned from itself. It was memory, deployed as infrastructure.

The service was launched on July 26th, 2019 (Product Hunt).

8. Acknowledgments

Special thanks to Abdulla Abdurakhmanov, the software engineer and system architect who designed and implemented the entire streaming inference pipeline. While I focused on dataset creation, labeling strategy, and model training, their work ensured everything ran smoothly — from Slack message ingestion to real-time predictions and system orchestration.