Infrastructure Meets Intelligence — Serving Real-Time NLP in Memonia

August 5, 2019 — Engineering

Table of Contents

- Introduction: From Datasets to Real-Time Decisions

- Architecture Overview: Events, Services, and Streams

- NLP Services in Production

- Slackbot Integration and API Routing

- Building Searchable Knowledge Base

- Deployment and Observability

- What We Learned

1. Introduction: From Datasets to Real-Time Decisions

After curating labeled datasets and building reliable classifiers (see Post 1), we turned our focus to infrastructure. How could we serve predictions, detect message types on the fly, and surface relevant answers — all without leaving Slack?

Our answer was a set of modular, message-driven NLP microservices, stitched together with Scala, Akka HTTP, and Google Pub/Sub. This post walks through how we turned our early datasets into a production-ready platform that powered Memonia’s Slack-native experience.

2. Architecture Overview: Events, Services, and Streams

The Memonia stack followed a loosely coupled service design. Key components included:

- A Slackbot that captured messages and triggered downstream processing

- A series of NLP microservices for genre detection, question classification, and reading comprehension

- A Pub/Sub pipeline for event propagation and async scoring

- A search layer backed by Elasticsearch and RocksDB

- Optional HTTP APIs for internal queries and diagnostics

This model let us support both streaming inference (for Slack messages) and batch inference (for historical data or training), depending on the message path.

3. NLP Services in Production

Each NLP task was served by a dedicated microservice, written in Scala using Akka HTTP or Akka Streams:

- Genre Detection: classified messages into types like

question,solution,article, orsmalltalk - Question Type Classification: determined if a question was

generalorcontext-based - Reading Comprehension: used a span-extraction model (fine-tuned BERT) to answer questions by selecting spans from prior messages

Services could operate in two modes:

- HTTP mode for on-demand queries (used during manual testing or Slackbot requests)

- Pub/Sub mode for continuous ingestion from Slack events

Each service used a consistent payload structure and published results to a shared inference topic, enabling downstream consumers to act immediately.

4. Slackbot Integration and API Routing

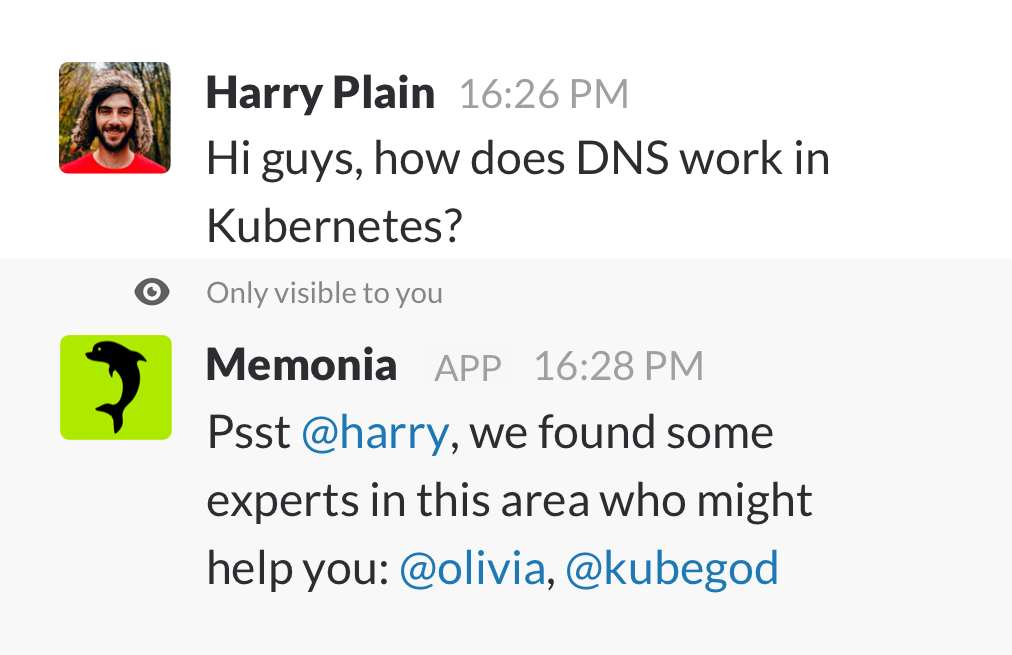

Memonia’s Slackbot was more than a passive listener — it was a smart router and response agent.

When a message arrived, the bot could:

- Push the event to the NLP dispatcher

- Collect genre/type predictions

- Fetch related answers from the knowledge store

- Suggest teammates or follow-up actions

The bot was stateless; its behavior was powered entirely by service responses. It used the Slack Events API and responded via chat.postMessage or thread replies. Thread context and user mentions were preserved for clarity.

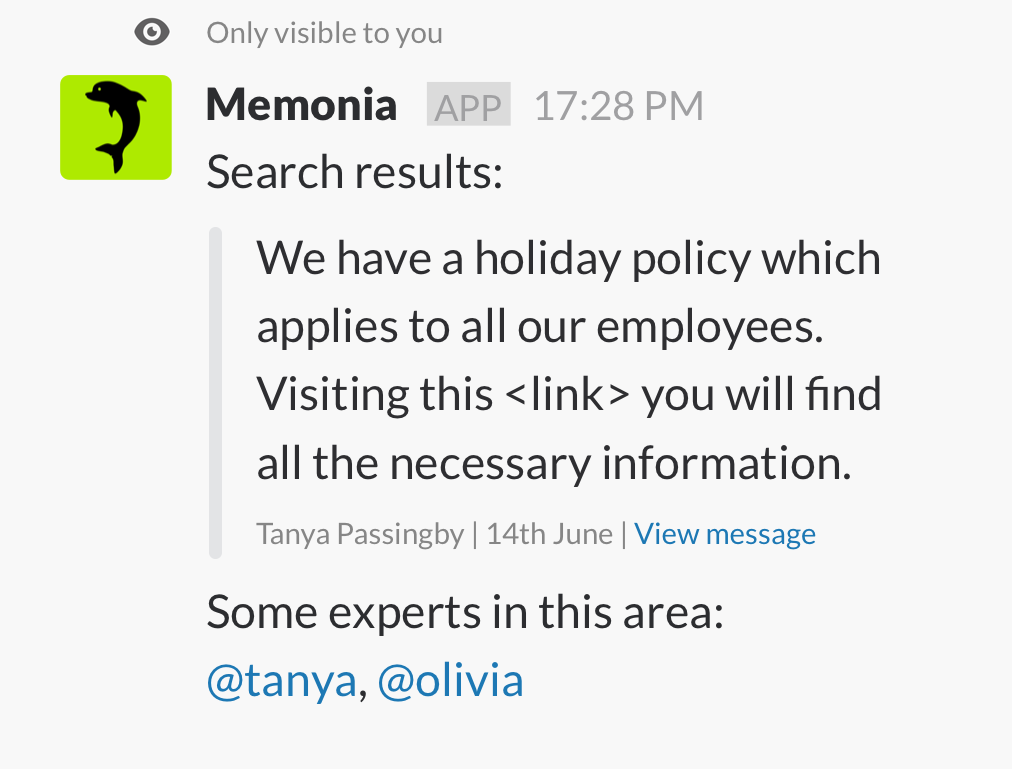

When triggered manually (e.g., “what is Memonia?”), the bot queried search and summarization endpoints, formatting answers for in-channel display.

5. Building a Searchable Knowledge Base

The other half of Memonia was its memory.

We indexed structured knowledge from:

- Slack Q&A pairs

- Curated answers

- Linked corpora (e.g., Confluence, Google Drive snippets)

The knowledge index supported:

- Keyword search (Elasticsearch, using Elastic4s)

- Semantic search (based on vector similarity)

- Entity extraction and deduplication

The data model used RocksDB for low-latency lookups and Elasticsearch for fuzzy matches. Each entry stored the full Slack thread, extracted question/answer spans, and confidence scores.

Queries returned:

- A match score

- An optional reference to a prior question

- A suggested reply template, editable before sending

6. Deployment and Observability

All services were packaged with SBT and published via pushRegistry. Containers were deployed on Kubernetes using deployment.yaml and service.yaml manifests.

Key features:

- JVM-based services with Akka HTTP

- Kubernetes readiness/liveness probes based on

/checkendpoints - TLS termination via PKCS12 keystore (when enabled)

- Frontend served via

memonia-web-appusing Scala.js and Twirl templates

We used fast-redeploy-on-kubernetes.sh to push updates quickly, and Pub/Sub to decouple traffic between services.

Logging and monitoring were kept simple:

- JSON logs to stdout

- Custom counters for success/failure

- Regression test harnesses (Postman + Newman) to verify NLP behavior weekly

7. What We Learned

Building Memonia’s infrastructure taught us:

- Stream-first architectures simplify Slack integration

- Small, task-specific services scale better than monoliths

- Even minimal labeling can support real-time intelligence if carefully balanced

- Pub/Sub is a great tool for chaining inference without bottlenecks

Next up: how we trained the models that powered all of this — including how we bootstrapped inference logs, streamed features with Spark, and scaled serving with BigDL.

← Read Post 3 – Learning from the Data — How We Trained and Served NLP Models at Scale