Productionizing SAM Segmentation: A GPU-Backed Async API on Google Compute Engine

December 22, 2023 — Engineering MLOps Computer Vision

Training a model is only part of the challenge.

Making it run reliably in a real system — handling GPU workloads, large models, and slow inference — introduces an entirely different set of constraints.This post explores how a segmentation pipeline was turned into a cloud‑based API capable of processing images asynchronously on a GPU.

Post 1 covered the experimentation arc (dataset, U-Net baselines, SAM pivot). This post is the engineering arc: wrap SAM inference behind an API, run it reliably on a GPU VM, and persist inputs/outputs in cloud storage—without timing out clients.

Table of Contents

- From Model to Service

- Service Architecture

- End-to-end flow: upload → async processing → polling

- Operational constraints: GPU memory, containers, and dynamic weights

- From masks to measurements

- Deployment Environment

From Model to Service

The goal of the service is straightforward:

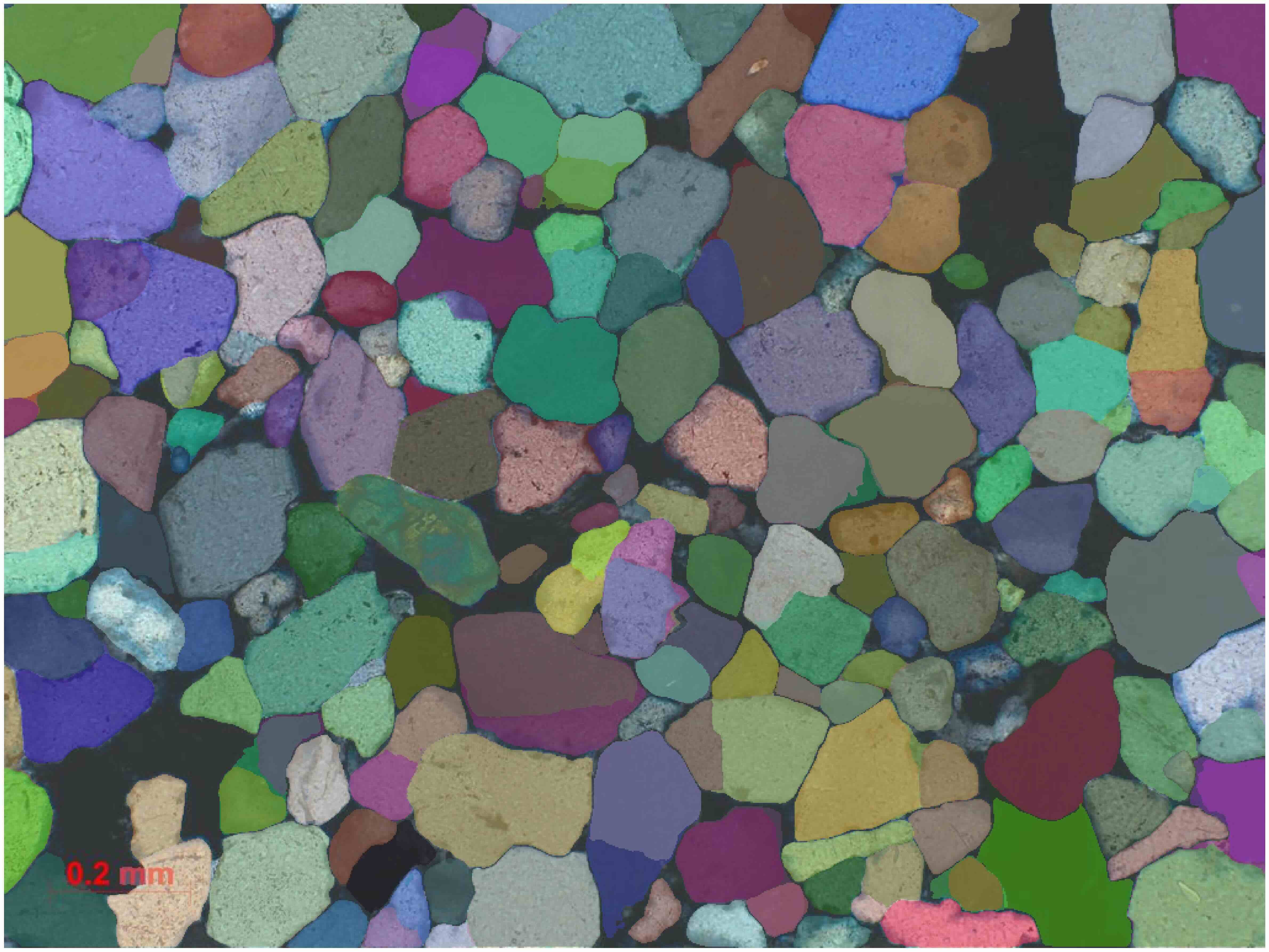

- accept a microscope image

- segment grains using the SAM model

- compute geometric measurements

- return results as structured JSON

At a high level the system performs:

Image → Segmentation → Mask → Measurement → JSON

Many machine‑learning models are computationally expensive.

SAM inference in particular:

- uses large model weights

- runs on GPUs

- may take seconds per image

However typical HTTP requests expect quick responses.

This mismatch often leads to timeouts or unstable services if not addressed carefully.

So the service implements a simple but effective pipeline:

- Segmentation: SAM (ViT-H) generates grain masks on a GPU

- Measurements: per-mask features are computed (e.g., area, equivalent diameter, axis lengths, orientation)

- Persistence: inputs and outputs are stored in Google Cloud Storage (GCS)

Service Architecture

The deployed system combines several components:

- Flask — REST API server

- PyTorch — running SAM inference

- scikit‑image — extracting geometric measurements

- Google Cloud Storage (GCS) — storing inputs and results

Engineering Decision

Using Object Storage as System State

Instead of storing intermediate results in memory or a traditional database, the system stores data in Google Cloud Storage.

Stored objects include:

- uploaded images

- generated analysis results

Object storage simplifies the architecture considerably.

The API itself can remain largely stateless, while persistent data lives in cloud storage.

Running SAM synchronously in an HTTP request quickly leads to timeouts.

The service therefore uses an upload‑and‑poll pattern.

That design makes the service effectively stateless at the HTTP layer: if a result file exists, return it; if it doesn’t, processing is still running.

End-to-end flow: upload → async processing → polling

Running SAM with a ViT-H backbone is heavy. A synchronous HTTP call often hits timeouts before inference finishes.

The solution was an async/polling pattern that keeps the API responsive.

1) Upload (POST /upload)

- Accepts a Base64-encoded image

- Validates image size (limit 1024×1024) to reduce CUDA out-of-memory risk

- Uploads the image to GCS

- Spawns a background worker using

threading.Threadand returns immediately with animageId

Large images significantly increase GPU memory usage and can trigger CUDA out‑of‑memory errors.

This is where backend engineering and ML constraints meet: the request handler must be strict enough to protect the GPU runtime, but fast enough to be a good API citizen.

2) Background processing

The background thread runs the full analysis:

- Segmentation:

SamAutomaticMaskGeneratoridentifies distinct objects (grains) - Feature extraction: compute per-object metrics (see below)

- Result storage: serialize to JSON and upload to GCS

The client does not need to wait for this step to finish.

3) Polling for Results (GET /image-analysis)

The client polls using imageId:

- the service checks for the existence of the corresponding result file in GCS

- returns it when available

Client sends an image, receives an imageId immediately, then polls until the analysis JSON is available.

Operational constraints: GPU memory, containers, and dynamic weights

Running SAM in production introduces several operational challenges.

GPU memory management

Two realities showed up quickly:

- the SAM checkpoint is large (2.5GB+)

- high-resolution inference can trigger CUDA OOM

Operational mitigations:

- strict input size validation (max 1024px)

- a dynamic weight loading

Containerization for repeatability

Engineering Decision

Containerizing the runtime

The service is containerized with Docker, served with Gunicorn, and built on an nvidia/cuda base image.

This ensures compatibility between:

- CUDA drivers

- PyTorch

- GPU hardware

Dynamic weight loading

Instead of baking the (~2.5GB) checkpoint into the Docker image, the container downloads it from a dedicated GCS bucket on startup.

Benefits:

- much smaller container image (faster build/push)

- weights can be updated without rebuilding the container

Separating model weights from the container image keeps builds smaller and allows model updates without rebuilding the entire service image.

From masks to measurements

Segmentation is only valuable here if it becomes measurable parameters. After SAM generates object masks, the service computes per-grain properties using scikit-image and serializes them as JSON.

The extracted feature set includes:

- area & perimeter

- equivalent diameter

- major/minor axis lengths

- orientation (degrees)

This is one of the most satisfying parts of the stack: a modern foundation model provides masks, but the measurement layer remains transparent, stable, and easy to validate (classic region properties on binary masks).

{

"metadata": {

"imageId": "0c9184d6-d43f-4dde-9696-25b7c965415b",

"timestamp": 1703187383.3206377,

"processTime": 21.175991920999877

},

"segmentedObjects": [

{

"class": "quartz",

"mask": "...",

"properties": {

"boundingBox": {

"x": 774,

"y": 368,

"width": 42,

"height": 47

},

"area": 1543,

"perimeter": 152.81,

"equivalentDiameter": 44.32,

"orientation": -30.78,

"minorAxisLength": 38.11,

"majorAxisLength": 52.72

}

}

]

}

Deployment Environment

Engineering Decision

Using GCE instead of managed ML endpoints

Managed platforms such as Vertex AI were evaluated.

However a custom GCE deployment provided:

- more control over the runtime environment

- simpler GPU configuration

- easier container deployment

For long‑running inference services with large models, direct infrastructure control can simplify debugging and optimization.

Why GCE won for this workload:

- more control over the runtime and GPU resources

- better fit for a persistent container doing heavy inference

- straightforward deployment using container images

The deployment flow is standard for a containerized GCE service:

- build & push the Docker image

- run the container on a GPU VM with

--gpus all

Final Outcome

The resulting system reliably performs:

- grain segmentation with SAM

- automated geometric measurement extraction

- asynchronous image analysis through an API

The pipeline effectively converts microscope imagery into structured geological data that can support further analysis.

→ Back to Post 1: Automated Grain Segmentation for Rock Thin Sections: From U-Net Baselines to SAM